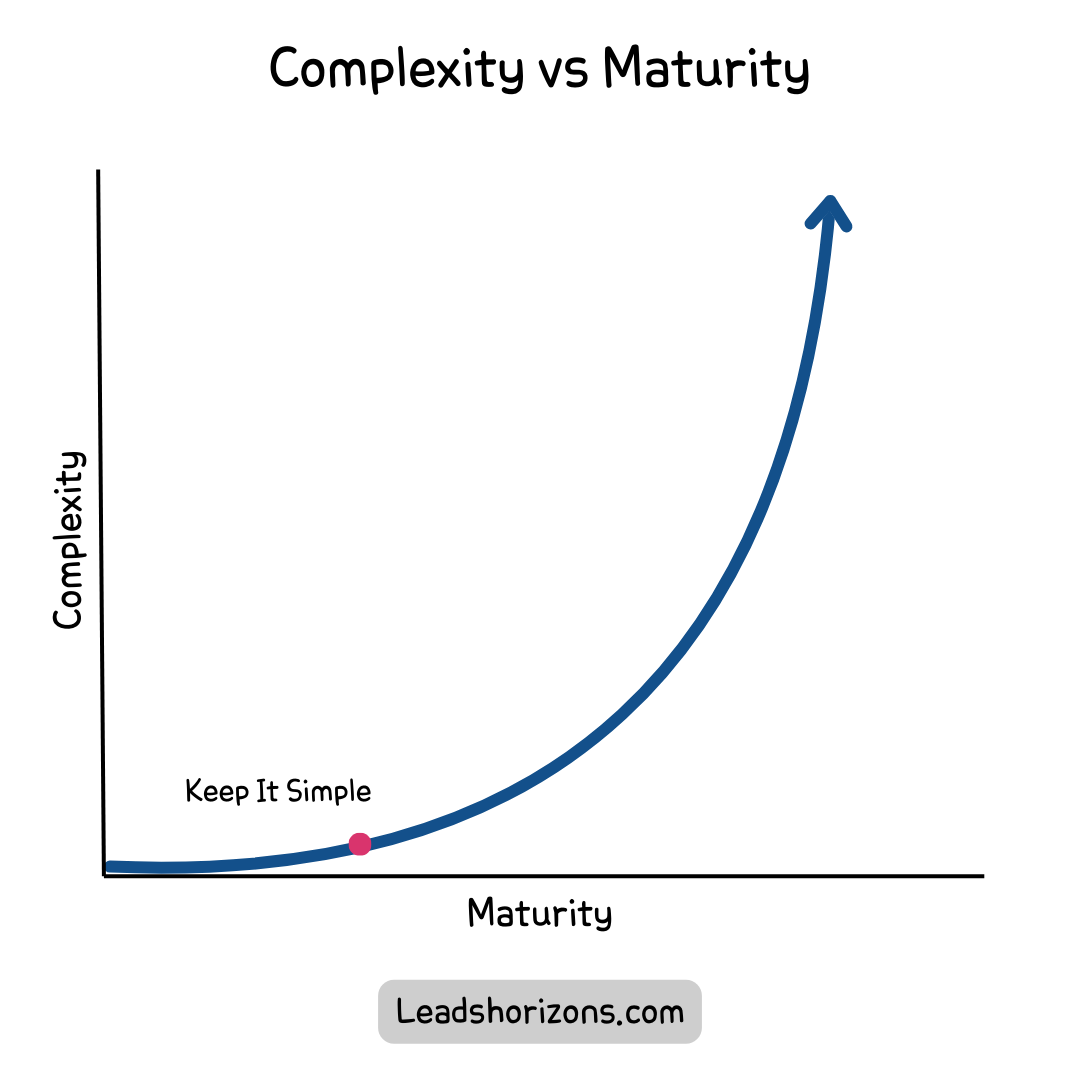

Where do you stand on where you work from? Work location seems to have become a complex subject for companies and employees over the last year. With an imbalance between the remote work employees want and the remote work employers offer, the issue merits discussion.

![Employers Offer Fewer Fully Remote Jobs and More Fully Onsite Jobs Than Employees Want — WFH Research [1]](https://prod-files-secure.s3.us-west-2.amazonaws.com/df75203e-cd58-41eb-8339-d5bf4288eb0e/d0c68370-26e8-43d4-b883-eeef626c5dbd/Untitled.png?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=ASIAZI2LB466VDEZN2VN%2F20250731%2Fus-west-2%2Fs3%2Faws4_request&X-Amz-Date=20250731T115508Z&X-Amz-Expires=3600&X-Amz-Security-Token=IQoJb3JpZ2luX2VjEKv%2F%2F%2F%2F%2F%2F%2F%2F%2F%2FwEaCXVzLXdlc3QtMiJHMEUCIQCjVYjb9rSrpWkUr7iEb8bbxZjW16KWHGk%2Fm88R5vJXvgIgcYyJNmfnim4I5g26Y4VkLIbEHDFdGVf%2FXDspRzHzCD4qiAQI1P%2F%2F%2F%2F%2F%2F%2F%2F%2F%2FARAAGgw2Mzc0MjMxODM4MDUiDH1%2Bb19iT5qs%2B9DzSCrcAwdA%2BJZyJCGX6xqRvQWM1aTVyuQJz5LSVdiKVimCN8hikLOJwonDw64dbQabhsMylDeiAoFvMzZNV9DiL72d3E2wEfkyf5%2BNBhtqgUHmHo%2Ba6qU4CHBBfMX%2Broa58UV87GizFO%2Bc3FaGqvnAJ3ACBtmroJH4rRZTj7MrSrqo3SiYREafsfkT%2BGvjkc81K92ftjRLCXL9logjM%2F0y0b1uoZpVE1VuJvWWDd7%2BjD%2BSgCEcHet3OsdX6Io04QpqIIbRTe1WxA0Qf6%2B99ZPKO1ApGWug4ufK%2BCOdyCfXfBAdE5XIOZ%2FIu824s4QyeaDiMSI5cS7a%2F1%2FZ8pqoyUOlW3n5rb%2FbuvGJBS3RHuO1yyAMIpLVKKt%2FiMO1Wq%2FiEtlrucxyArh9cvqZMf7RqQQDleeHmhmKmWZuU9z8tllivS16hFFoQ%2F6eO65VDqMXwPh6Cb8bogAFf%2BVAr%2FDeiq5fCU6mpbee8MDg93WqsvDR%2Fu83IfksHHUJFeeFGwRKWfwSHmp0wdrqliah4Zzt65ywE%2B%2BJR8K3nKEu9N2RQ1NQTTlvm3G2Irdgnnhi6QRQVKpTVrh9IbdD3WpF7ksFW0lPW6lz9Ft87gbVFF4uVjL3cO3rMA2U%2FhmPT%2B%2Fa34jGqDLkMO2crcQGOqUBZL%2BGi7IDopGrNBqi27qsZbI1nIC0o%2BnxMNrGs5Zs39BvborYjD1lJTZDHMRPU0MLZYGUBeNZpwhcv%2BBxP2DzgciqMSeRvsrPPpIImjicC5Ic9%2FTlOKBsUOJUiZQ2XyKyVvD5bh2zSC1I9WknAuko2dSREbiuPSHWEVTrz9KSuuh1rgIfmuLzBXc1%2F1LQwvRSyhS27OcrbBxYtP48obRyXzshGj2y&X-Amz-Signature=4a7a781cfa6fdde9db1e9984e91aa3f532259e9f05fd1994d8402f993887a824&X-Amz-SignedHeaders=host&x-amz-checksum-mode=ENABLED&x-id=GetObject)

Reflecting on my professional journey, I have had the opportunity to explore various work modalities: from my early days in on-site environments, savoring the technical discussions around me, to working remotely across different regions, embracing the nomadic lifestyle and thriving in multicultural settings.

Let's explore this topic further in this article.

Work Location in the Past 5 Years

First, an important point: remote work isn't a novel concept that emerged during the COVID-19 pandemic. I've been working remotely from various locations, including France, Spain, and the USA, since 2016. The tools and infrastructure for remote work, however, have evolved considerably over time.

Before the pandemic, the majority of companies preferred on-site operations and invested heavily in office spaces to meet their employees' needs.

The pandemic then forced a large-scale social experiment in remote work. Most of us had to adapt to working from home, and, surprisingly, it turned out to be quite effective!

However, now that the pandemic has wound down, companies are increasingly pushing to revert to the pre-pandemic model. Meanwhile, many employees are reluctant to give up the work-life balance they gained during this period (shorter or no commutes, more family time, more personal time, etc.).

The Different Modalities of Work

Before going further, let's define the different work models.

- On-Site: This is the conventional work model, where employees must be physically present at a specific location, usually an office, to do their work.

  This means the employee needs to live in the same city as the company at all times.

- Hybrid: This flexible model blends on-site and remote work. Employees might work from home on some days and from the office on others.

  This usually means the employee needs to live in the same city as the company.

  Some subtypes include:

  - Weekly office days: specific days of the week are designated as either on-site or remote.

  - Flexible hybrid: individuals choose the best place to work at any given moment, but must meet a quota of on-site time.

- Remote: Employees work outside the traditional office environment, such as from home, a co-working space, or any other location with internet access.

  This doesn't require the employee to live in the same city as the company.

  Some subtypes include:

  - Quarterly days: the employee must spend a set number of days on-site within a given period, such as a quarter, and works remotely the rest of the time.

  - On-demand travel: the employee travels only when a significant event requires a group of people to collaborate in person.

  - Full remote: the employee isn't required to travel at any particular time unless it's strictly necessary.

As we can see, subtle differences separate the Hybrid and Remote work models. The deciding factor is whether the employee must live in or near the company's city.

Excuses to go back to the office

Several factors may be driving the current push to return to the office:

- Distrust of Employees: Some companies worry that employees will be less productive when working remotely. These concerns stem from the belief that, without direct supervision, employees are more prone to distractions or unproductive behavior. Working from home also makes monitoring employees more complex, which some companies dislike.

- Cultural Concerns: A strong, cohesive company culture is often considered a key factor in a business's success. Some companies believe that this culture can only be fostered through face-to-face interactions and shared experiences that come with working in the same physical space. They worry that remote work might dilute this culture, making it harder to maintain shared values, facilitate team bonding, and encourage a sense of belonging among employees.

- Performance Changes: The shift to remote work can change how employees perform. This is a complex matter, because employee performance has no single definition and, as we will see later in this article, it can be driven not only by the person but also by their environment.

- Office Investments: Companies that have invested substantial amounts of money in their office spaces may feel compelled to make use of them. These investments include not just the physical space itself, but also equipment, furniture, and other amenities designed to make the office a comfortable, productive environment. Continuing to pay rent, maintenance, and utilities for a space that sits largely unused can also motivate a return to the office.

- Investor Contributions: Investors often diversify their portfolios as a risk management strategy, and a significant portion of these investments may be allocated to real estate, either directly or through various investment funds. If the workforce shifts toward remote work, demand for office space could fall, reducing the returns on these real estate investments. The trend toward remote work can therefore affect the broader investment community.

- Community Impact: Office districts in cities and towns have created numerous jobs across many sectors. The local economy in these districts often thrives on the regular influx of office-goers, supporting a wide range of businesses such as restaurants, cafés, fitness centers, and retail stores, all of which rely heavily on the foot traffic generated by nearby offices. If more people transition to remote work, these businesses can be significantly affected. As foot traffic decreases, they may struggle to sustain themselves, leading to potential job losses and broader socio-economic implications.

Debunking Concerns

While personal skepticism can influence views, performance assessments should be driven by solid data. With that in mind, let's look at some of the available evidence:

One groundbreaking Stanford study on the subject, a randomized experiment run at Ctrip, a Chinese travel agency with 16,000 employees, found a marked increase in the output of employees working from home. During the study, the work-from-home group showed a 13% average improvement in performance over the office-based control group [2].

Even with this data in hand, it's important to note that employee performance can vary across work modalities.

In both remote and on-site environments, everyone works under the same modality, which creates a unified workflow. The hybrid model, by contrast, can introduce complications: in some of the hybrid subtypes discussed above, some people are in the office while others are remote on the same day. This dynamic can hurt the performance of remote workers, not through any fault of their own, but because they may be excluded or treated differently.

Cultural Concerns

The cultural concern is usually summed up by the phrase:

There are things that happen in the office that don't happen remotely

The idea is that certain discussions happen naturally when people share a physical space. Hybrid work tries to capitalize on this by making office days count for such interactions. However, a common complaint among hybrid employees is that they spend a substantial portion of their office time on video calls anyway.

For remote work, on the other hand, replicating these interactions depends on effective communication and the right tools, an area many companies are still struggling to address.

Office Investments

Real estate investments are usually expected to appreciate over time, which makes this question more complex. It helps to split companies into two groups based on whether they own their space:

- Owners: The physical space is an asset and a key component of their balance sheet. Naturally, they'll want to maximize its value, since it directly affects their valuation.

- Renters: The space is an ongoing cost they need to manage, and reducing that cost can significantly improve their profit margins.

As you might expect, each group has a distinct incentive: on-site work will likely appeal to owners, while remote work is more beneficial for renters.


Addressing Remote Concerns: Make Remote Work

From what we have observed, the main concerns stem from the absence of a unified approach and a shared workspace.

To overcome these challenges, a company can follow a few key principles:

Remote First

The guiding principle of this work model is:

If one person is remote, everyone is remote

This approach aims to treat all employees equally, regardless of their location. Every process, from meetings to casual conversations, should be designed with remote employees in mind. This ensures that remote workers are not sidelined or disadvantaged compared to their on-site peers.

To this end, investing in the right collaborative tools is crucial so that everyone has the same access and capabilities. Here are some tools you might need:

- Virtual White Boards: Most meetings involve some form of interaction that, in an office, typically happens on a whiteboard. Using a physical whiteboard alongside a conferencing tool risks limiting the involvement of remote participants, so a virtual alternative is necessary. Miro is a good example: you can invite people to collaborate live on various types of boards.

- Pairing Software: To work efficiently in pair or mob programming, collaboration should feel as if everyone were working on the same machine. JetBrains' Code With Me, available in IntelliJ IDEA, is one example.

  https://www.youtube.com/watch?v=Lq0fCMCK-Yw

- Collaborative Knowledge Base: It's important to have a centralized location for team and company information. However, many tools are rigid and lack concurrent editing, so we need to choose the right tool for our needs. Personally, I've found Notion quite useful.

- Collaborative Documents: As with the knowledge base, we need to be able to edit documents such as presentations and spreadsheets concurrently, for example when preparing for presentations or budget cycles. Google and Microsoft both offer robust products with the necessary features.

- Async Communication Tools: Everyone needs focus time, so having the right tools for asynchronous communication, including a chat application and traditional email, is essential.

The last three tools are not exclusively for remote work but are important for any work mode.

Virtual office

Much like a physical office promotes interaction and collaboration, a virtual office offers an online shared space for employees to engage, collaborate, and foster relationships.

This isn't merely about conferencing tools, but about creating social environments with virtual desks and communal areas. These tools let employees strike up organic conversations when they move close to someone's virtual desk, relax in virtual coffee spaces, play games, and more. Gather.town is a prime example of such a tool.

It's also worth noting the cost-effectiveness of virtual offices compared to traditional physical spaces.


Parting words

Remote work has the potential to be as effective as in-office work, offering employees better work-life balance without compromising the company culture that we hold dear. Like many challenges in our industry, successful remote work hinges on using the right tools. The traditional tools used for in-person work may not be suitable for this new dynamic.

To be candid, hybrid work models fall short in numerous ways, often representing a compromise rather than an ideal solution. In my view, hybrid models are the most challenging to manage, and their impacts are not always evident.

Given this, it's essential that we reassess the paths our companies are taking. We may be inadvertently hindering our own potential due to a few manageable concerns.

[1] https://wfhresearch.com/wp-content/uploads/2024/04/WFHResearch_updates_April2024.pdf

[2] https://qz.com/1627980/remote-work-can-boost-productivity-if-you-have-the-right-tools
